Understanding Nudify.ai: The Growing Concern Of AI Undress Apps

Have you heard about something called nudify.ai? It's a topic that has been gaining a lot of attention lately, and for some very concerning reasons. This isn't just about new technology; it's about the real-world impact on people, their privacy, and their well-being. We're talking about artificial intelligence tools that are causing serious harm.

These applications, sometimes known as "undress apps" and often grouped with "deepfake" programs, have emerged quickly. They use AI to digitally remove clothing from images of people. In practice, someone can upload an ordinary picture and the software will make it look like the person in the photo is nude, all without their permission. It may look like a digital trick, but the consequences are very serious.

This blog post aims to help you get a better grasp of what nudify.ai apps are all about. We'll look at how they operate, the real risks they bring, and what you can do to keep yourself and your loved ones safe from their harmful effects. It's a conversation we really need to have, especially given how fast these tools are spreading.

Table of Contents

- What is Nudify.ai and How Does It Work?

- The Alarming Rise of AI Undress Apps

- The Real-World Impact on Victims

- Legal and Ethical Questions

- Protecting Yourself and Your Loved Ones

- A Call for Responsible AI Development

- Frequently Asked Questions About Nudify.ai

What is Nudify.ai and How Does It Work?

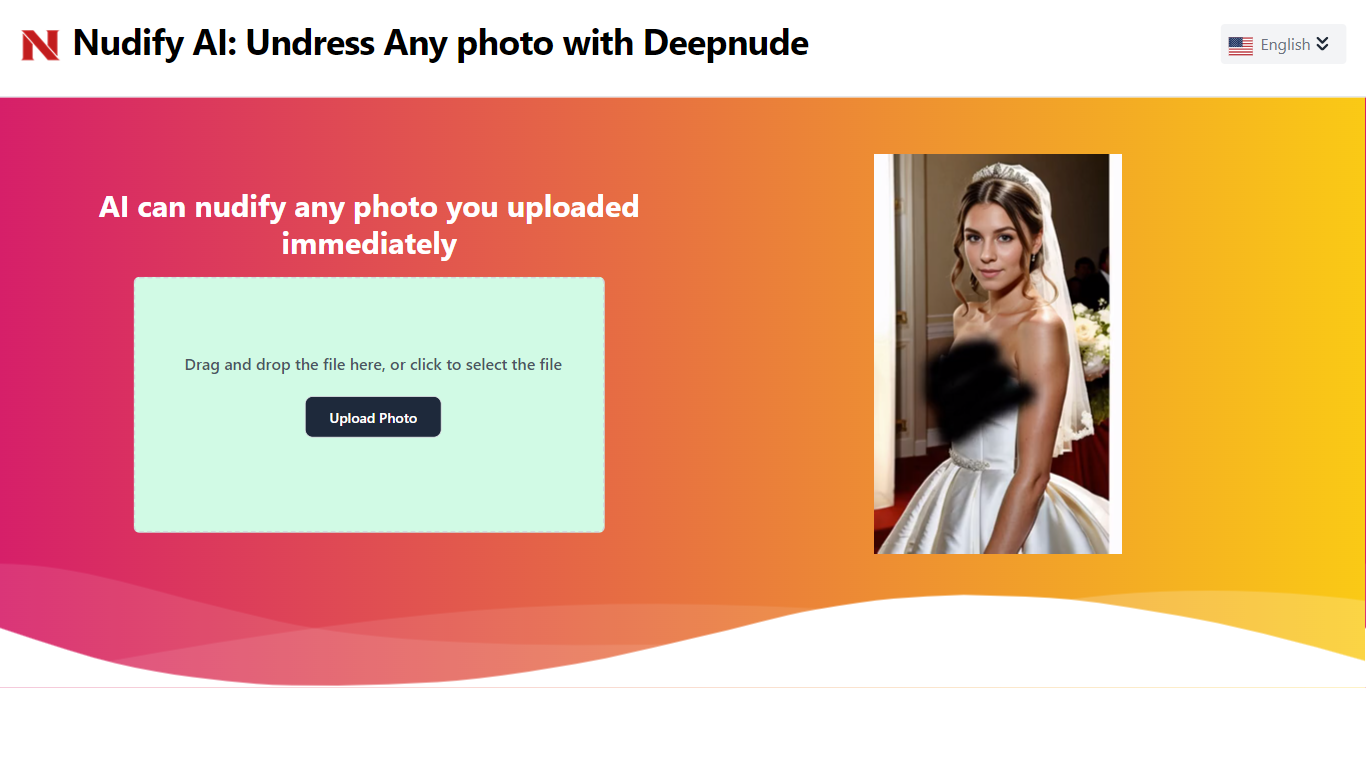

Nudify.ai, or "undress AI" as it's sometimes called, refers to a range of artificial intelligence programs that started appearing around 2023. These tools, like "Unclothy AI" or "ClothOff," are built on deep learning models. They are designed to take a picture, detect where clothing is, and digitally remove it. The underlying process is complex, but for the user it is made to look very simple.

For the person using the app, the process is straightforward. They upload an image, and the AI draws on what it has learned from countless other images to create a new version of the photo in which the person appears not to be wearing clothes. This takes just minutes. The goal is to generate realistic nude images, and the results are often quite convincing. This quick and easy creation process is part of the problem.

These services often advertise how quickly they can produce altered images, and they rely on recent advances in AI and deep learning to do it. However, it's very important to remember that these images are fake. They are not real pictures of the person, but a creation of the AI. The technology itself is powerful, and its misuse is what has caused so much alarm.

The Alarming Rise of AI Undress Apps

Reports suggest that millions of people are visiting these harmful AI "nudify" websites. It's a big number, and it shows just how popular these tools have become. New analysis indicates that the sites running these services are also making a lot of money, which is troubling. They often rely on technology from US companies, which adds another layer to the discussion.

The rise of these "undress apps" has sparked a lot of worry. Researchers have noted that apps and websites using AI to undress women in photos are becoming incredibly popular. This trend is concerning because it means more and more people are being exposed to this kind of content or, worse, becoming victims of it. The situation has grown very quickly, and many people are still trying to understand it.

These apps are easy to find online, sometimes in unofficial app stores or through social media channels. Once you start looking, they seem to be everywhere. The ease of access, combined with the quick creation time, means a deepfake can be made in just a few moments. This speed and widespread availability are fueling the problem and making it harder to control.

The Real-World Impact on Victims

The consequences of these AI nudify apps are truly heartbreaking for the people affected. Tech-savvy teenagers, for instance, are reportedly using these apps to bully and embarrass their classmates and friends. Imagine having a fake nude image of yourself created and shared without your permission; it's a devastating experience. This kind of digital abuse can have deep psychological impacts on victims, causing immense distress and shame. It's a very serious form of harm, and it's happening more often.

These AI "nudify" apps, sometimes called "nudifier apps," are being used to create fake nude images of teens. Learning how they work, understanding the risks, and knowing how to protect young people are all important. The psychological toll on victims is a major concern, and one that often gets overlooked in the broader conversation about AI. Reports of such abuse are, unfortunately, growing, which means more and more individuals are facing these terrible situations.

When someone's image is altered without their consent, it's a profound violation of their privacy and dignity. The feeling of helplessness can be overwhelming for victims. It's not just about a picture; it's about their reputation, their mental well-being, and their sense of safety. These apps exploit trust and create a harmful environment, especially for young people who are already vulnerable. It's a truly upsetting reality that we are seeing unfold, and it's something we need to address directly.

Legal and Ethical Questions

The rise of nudify apps raises complicated legal and ethical questions. One of the biggest issues is the lack of consent. These apps use AI to alter images, often making people appear nude without their permission, which is itself a serious invasion of privacy. Some of these services try to exploit legal loopholes, making it harder to hold them accountable and very difficult for victims to seek justice.

AI "nudify" sites have been sued for victimizing people, which shows a growing push to fight deepfake abuse. Nicola Henry, for example, writing in The Conversation, has discussed how we can battle this kind of digital harm. The legal landscape is complex and still developing as the technology moves fast. Working out who is responsible, and how to enforce laws across different countries, is a real challenge.

Another major concern is the lack of transparency surrounding these nudify sites. A researcher looking into them found it very hard to tell who was running the sites or how people were paying for the images. This secrecy makes it even harder to track down and stop the perpetrators. The fact that a generative AI nudify service was found storing explicit deepfakes in an unprotected cloud database is also alarming. This lack of security means that very sensitive and harmful content could be easily accessed, which is a huge risk to everyone involved.

Protecting Yourself and Your Loved Ones

Given the concerns surrounding nudify.ai and similar tools, taking steps to protect yourself and those you care about is very important. The first thing to remember is that these images are fake. If you or someone you know encounters such an image, it's crucial to understand that it's a fabrication. Educating young people about how these apps work and the dangers they pose is a key step. Talk to them about digital consent and the importance of thinking carefully before sharing personal images online.

It's also a good idea to be careful about what photos you share publicly online. The less material available for these AI tools to misuse, the better. Review your privacy settings on social media platforms and other online services. Limiting who can see your photos can help reduce the risk. If you or someone you know becomes a victim of this kind of abuse, remember that you are not alone. Resources and support are available, including guidance on reporting online abuse.

If you discover that a fake image of you or someone you know has been created or shared, it's important to act. Report the content to the platform where it's hosted. Many platforms have policies against non-consensual intimate imagery and deepfakes. Seeking legal advice is also an option, as lawsuits are being filed against these sites. While the fight against deepfake abuse is ongoing, staying informed and taking proactive measures can make a significant difference. It's about being aware and taking charge of your digital presence, which is something everyone should do.

A Call for Responsible AI Development

The problems with nudify.ai highlight a broader need for more responsible development and use of artificial intelligence. While AI offers incredible potential for good, tools that enable harm, especially non-consensual harm, need to be addressed seriously. It's not just about blocking apps; it's about the ethical principles guiding AI creation itself. Companies that develop AI models need to consider the potential for misuse and build safeguards into their technology from the very beginning. This includes making sure their technology isn't easily used for harmful purposes.

There's a growing push for stronger regulations and laws to keep up with the rapid pace of AI development. Current legal frameworks often lag behind the technology, creating loopholes that allow harmful apps to operate. Policymakers, tech companies, and the public need to work together to create a safer digital environment. This means having clearer rules about what AI can and cannot be used for, especially when it involves people's images and privacy. It's a big task, and one that is becoming more urgent as these issues become more common.

Ultimately, the goal is to foster an environment where AI serves humanity in positive ways, rather than being used to cause distress and harm. This involves ongoing dialogue, research, and collaborative efforts to understand the risks and build effective solutions. It's a continuous process, and it requires everyone's participation. The conversation around nudify.ai is a wake-up call for the broader AI community to prioritize safety and ethics. We need to keep talking about this to make sure things get better.

Frequently Asked Questions About Nudify.ai

What are nudify AI apps?

Nudify AI apps are artificial intelligence programs that use deep learning to digitally remove clothing from images of individuals. They can generate fake nude images from regular photos, often without the person's permission. These tools started becoming more visible around 2023, and they've raised a lot of concerns because of their ability to create very realistic, yet false, pictures.

Are nudify AI apps legal?

The legality of nudify AI apps is complex and varies by region. While the creation of such tools may fall into a legal gray area in some places, the non-consensual creation and sharing of intimate images, whether real or fake, is illegal in many jurisdictions. Lawsuits are being filed against these sites for victimizing people, which shows a growing legal pushback. It's a tricky area, and the laws are still catching up to the technology.

How can I protect myself or my kids from nudify AI?

Protecting yourself and your children involves several steps. First, educate everyone about how these apps work and the fact that the images they create are fake. Be very careful about sharing personal photos online, especially on public platforms. Adjust privacy settings on social media accounts to limit who can see your images. If a fake image is created, report it to the platform where it's shared and consider seeking legal advice. Staying informed and having open conversations about digital safety are your best defenses.
