Remote IoT Batch Job Example: Getting Yesterday's Data From Remote Devices

Getting information from far-off devices can sometimes feel like a puzzle, especially when you need to catch up on what happened a while ago. So, figuring out how to run a remote IoT batch job that collects everything recorded since yesterday is a pretty common challenge for many folks working with connected gadgets. You see, these devices are often out in the wild, gathering all sorts of interesting bits of data, and sometimes, you just miss a beat or need to review a whole day's worth of activity all at once. It's a bit like trying to collect all the notes from a busy day after it's already over, but for machines.

This kind of task, getting information that has piled up, really does need a good plan. You might have sensors tucked away in places that are hard to reach, or perhaps your network connection isn't always perfect, you know? That makes pulling data in a steady stream a bit tricky. That's why understanding how to set up a batch job for remote IoT devices, specifically to grab data from yesterday, is actually quite helpful. It ensures you don't miss any important details, even if they're a little old.

We're going to talk all about how to handle this kind of data gathering. We'll look at what a batch job actually is, why "remote" makes things a little different, and what it means to fetch data that has been sitting there "since yesterday." This article will, in a way, walk you through the steps to make sure your remote IoT data is collected and processed, even if it's not happening right this second.

Table of Contents

- What is an IoT Batch Job, Anyway?

- Setting Up Your Remote IoT Batch Job

- A Practical Remote IoT Batch Job Example

- Addressing Common Challenges

- Making the Most of Historical IoT Data

- Frequently Asked Questions About Remote IoT Batch Jobs

- Final Thoughts on Your Remote IoT Data

What is an IoT Batch Job, Anyway?

An IoT batch job is, basically, a way to process a whole group of data points from connected devices all at once, rather than one by one as they arrive. Think of it like gathering all the mail from your mailbox at the end of the day instead of checking for each letter as it gets delivered. This approach is often chosen when you have a lot of data, or when the data doesn't need to be acted upon immediately, you know? It's a very efficient method for handling large amounts of information that builds up over time.

These jobs usually involve several steps. First, the data gets collected, often from many different sensors or gadgets. Then, it's stored somewhere temporarily. After that, a special program runs, taking all that stored data and doing something useful with it, like cleaning it up, analyzing trends, or generating reports. Collection can go on all day, but the processing part, from reading the stored data to producing results, runs as a single, scheduled operation.

For instance, a batch job might collect all the temperature readings from a factory floor over an eight-hour shift. Then, it processes that entire chunk of data to figure out the average temperature, the highest temperature, and any unusual spikes. This kind of work is typically set to run at specific times, perhaps every night or once a week, making it quite predictable.
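To make the factory-floor example a bit more tangible, here is a minimal sketch of that kind of summary in Python. The function name `summarize_shift`, the sample readings, and the spike rule (more than 5 degrees away from the batch average) are all assumptions made up for illustration; a real job would use whatever rule fits your process.

```python
from statistics import mean


def summarize_shift(readings, spike_threshold=5.0):
    """Summarize one batch of (time label, temperature) readings.

    A reading counts as a spike when it differs from the batch average
    by more than spike_threshold degrees (an illustrative rule only).
    """
    temps = [temp for _, temp in readings]
    average = mean(temps)
    spikes = [(ts, temp) for ts, temp in readings
              if abs(temp - average) > spike_threshold]
    return {"average": average, "highest": max(temps), "spikes": spikes}


# Hypothetical readings from one shift: (time label, degrees Celsius)
shift_readings = [
    ("08:00", 21.0),
    ("10:00", 22.0),
    ("12:00", 30.0),
    ("14:00", 21.0),
    ("16:00", 21.0),
]

summary = summarize_shift(shift_readings)
print(summary)
```

The point is that the whole chunk is looked at together: the average is only known once every reading in the batch is available, which is exactly why this work suits a batch job rather than per-message processing.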

The "Remote" Element

Adding "remote" to the mix means these IoT devices are not right next to your processing system. They could be miles away, perhaps in another building, across a city, or even in a different country, you see? This distance brings with it a few extra things to think about, like how the data travels from the device to where it needs to be processed. Connectivity becomes a much bigger deal when things are far apart.

A remote setup often means relying on various network connections, which might not always be super stable or fast. You could be dealing with cellular networks, satellite connections, or even Wi-Fi in a large, spread-out area. Because of this, getting all the data back to a central point can sometimes take a bit longer or encounter interruptions. It's almost like trying to send a large package across the country; you need to make sure the delivery route is clear.

This distance also means that if something goes wrong with a device, you can't just walk over and fix it easily. You need systems in place that can handle issues from afar, perhaps by sending commands remotely or by having the device store data until a connection is available. It's a rather important part of managing these kinds of operations effectively.

Why "Since Yesterday" Matters

When we talk about data "since yesterday," we're specifically looking at historical information. This isn't about real-time updates happening right now; it's about collecting everything that happened in a defined past period, usually the previous calendar day. There are many reasons why you might need to do this, actually. Maybe a system was offline for a bit, and you need to catch up on the missed data.

Perhaps your analysis needs a full day's worth of data to spot trends or anomalies that wouldn't be clear from just a few hours of readings. For example, if you're monitoring energy consumption, you might want to see the total usage for the entire previous day to compare it with other days. This allows for a much broader view of operations.

The "since yesterday" aspect also implies that the data might be stored locally on the device or in a temporary buffer before being sent. This local storage acts as a safety net, ensuring that even if the network connection drops for a while, the data isn't lost. Then, when the batch job runs, it pulls all that accumulated information, making sure you get a complete picture. It's a pretty common requirement for many applications.
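One detail worth pinning down early is what "yesterday" means in code. The sketch below is one possible way to do it, assuming timestamps are kept in UTC and that "yesterday" means the previous calendar day; the helper names `yesterday_window` and `in_window` are hypothetical. If your reports follow a local time zone, the window would need to be computed in that zone instead.

```python
from datetime import datetime, time, timedelta, timezone


def yesterday_window(now):
    """Return (start, end) covering the previous calendar day in UTC.

    `now` must be timezone-aware. The window is half-open
    (start <= timestamp < end), so a reading taken at exactly
    midnight belongs to one day only.
    """
    today_utc = now.astimezone(timezone.utc).date()
    today_midnight = datetime.combine(today_utc, time.min, tzinfo=timezone.utc)
    return today_midnight - timedelta(days=1), today_midnight


def in_window(timestamp, start, end):
    """True when the timestamp falls inside the half-open window."""
    return start <= timestamp < end


# Example: the job wakes up at 03:00 UTC on 2 May 2024
now = datetime(2024, 5, 2, 3, 0, tzinfo=timezone.utc)
start, end = yesterday_window(now)
print(start.isoformat(), "to", end.isoformat())
```

Because late, buffered readings carry the time they were measured, filtering on that measurement timestamp (rather than on arrival time) keeps them in the day they actually belong to.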

Setting Up Your Remote IoT Batch Job

Getting a remote IoT batch job ready involves a few key pieces working together. You need to think about how your devices gather their information, where that information goes, and what tools you'll use to process it all. It's a bit like preparing for a big cooking project; you need all your ingredients and equipment lined up before you start.

The goal is to create a system that reliably pulls data from your distant devices, even if there are occasional hiccups, and then processes it efficiently. This means choosing the right technologies and setting up the workflows correctly. A good setup will save you a lot of headaches later on, you know? It really does make a difference.

We'll break down the main components you'll typically need to consider when putting together such a system. From the very start of data collection to the final steps of analysis, each part plays an important role in making your batch job a success.

Data Collection and Storage

The first step in any IoT batch job is getting the data from the devices themselves. Remote devices often use specific communication protocols to send their readings, like MQTT or HTTP, over various network types. They might send data to a central cloud platform or a local gateway first. This initial collection point is absolutely crucial for gathering everything.

Once the data leaves the device, it needs a place to land and wait for processing. This is where storage comes in. For batch jobs, you're usually looking for a storage solution that can handle a lot of incoming data quickly and reliably. This could be a message queue, a data lake, or a time-series database. The important thing is that it can hold all the data from "yesterday" until your batch job is ready to pick it up.

Many cloud providers offer services specifically for this, allowing devices to easily send their data to a scalable storage solution. This way, you don't have to worry about running out of space or losing data if there's a sudden burst of information. It makes managing the raw input much simpler, actually.

The Batch Processing Engine

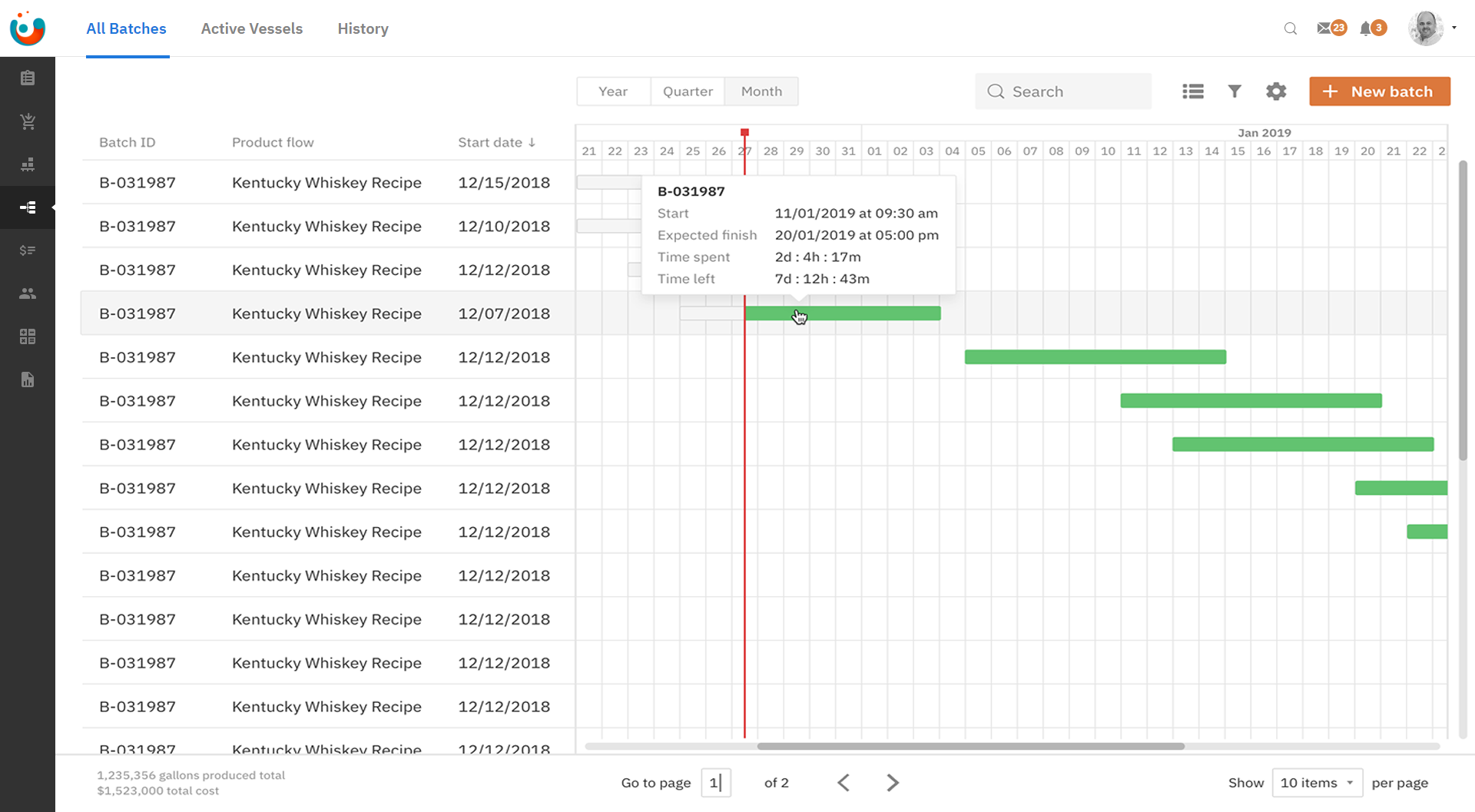

After the data is collected and stored, you need something to actually do the work of processing it. This is your batch processing engine. These are software tools or services designed to take large datasets and perform operations on them, like filtering, aggregating, transforming, or analyzing. Apache Spark, Hadoop MapReduce, or cloud-native batch services are common examples.

The engine reads the data from your chosen storage location, applies the logic you've defined, and then usually writes the results to another storage area, perhaps a database optimized for queries or a reporting dashboard. The logic you define tells the engine exactly what to do with each piece of data or with the dataset as a whole. It's very much like a recipe for your data.

Choosing the right engine depends on the amount of data you have, the complexity of your processing, and your budget. Some engines are better for extremely large datasets, while others are great for more moderate volumes with complex calculations. This choice is, you know, a pretty big deal for performance.

Scheduling and Triggering

A batch job doesn't just run on its own; it needs to be told when to start. This is where scheduling and triggering come into play. For a "since yesterday" job, you'll typically want it to run once a day, perhaps in the early morning hours when system load might be lower. You can use tools like cron jobs on a server, or managed scheduling services provided by cloud platforms.

The scheduler will simply initiate the batch processing engine at the predetermined time. It's a bit like setting an alarm clock for your data processing. This ensures that the job consistently runs and processes the new "yesterday's" data without you having to manually start it every single time. It brings a lot of automation to the process.

Sometimes, a batch job might also be triggered by an event, like a certain amount of data accumulating in storage, rather than just a time. However, for daily historical data, a time-based schedule is usually the most straightforward approach. This setup, you know, makes operations much smoother.
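On a server with cron, a daily 3:00 AM run corresponds to the schedule expression `0 3 * * *`. If you want to see the same idea without cron, the small Python sketch below works out when the next daily run is due. The function name `next_run` and the 3:00 AM default are assumptions for illustration, and it uses naive datetimes in the server's local time to keep things short.

```python
from datetime import datetime, time, timedelta


def next_run(now, run_at=time(3, 0)):
    """Return the next datetime at which the daily job should start.

    If today's run time has already passed (or is right now),
    the job is scheduled for the same time tomorrow.
    """
    candidate = datetime.combine(now.date(), run_at)
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


# Before 03:00 the job still runs today; after it, tomorrow.
print(next_run(datetime(2024, 5, 1, 2, 0)))
print(next_run(datetime(2024, 5, 1, 9, 30)))
```

In practice a managed scheduler or cron does this bookkeeping for you; the sketch only shows the logic behind "run once a day at a fixed time."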

A Practical Remote IoT Batch Job Example

Let's walk through a concrete example to make this whole concept a bit clearer. Imagining a real-world situation can help connect all the pieces we've talked about so far. This example will show how all the components of a remote IoT batch job come together to collect and process data that has been accumulating for a day.

We'll consider a common scenario that many businesses face. This kind of example really does highlight the practical application of these technical ideas. It’s important to see how these systems work in action, even if it's just a conceptual run-through.

Scenario: Temperature Sensors in a Warehouse

Picture a large, remote warehouse filled with goods that need to be kept at a specific temperature. Throughout this warehouse, there are dozens of small IoT temperature sensors. These sensors are battery-powered and connect to the internet using a low-power wireless network, perhaps LoRaWAN or NB-IoT, sending their readings every 15 minutes.

The data from these sensors goes to a cloud-based IoT platform. However, the business doesn't need to see every single temperature reading in real-time. What they really need is a daily report that shows the average temperature in each zone of the warehouse for the entire previous day, along with any times the temperature went outside the acceptable range. This daily summary is what they use for compliance and operational checks.

Because the warehouse is remote, and connectivity can sometimes be a little spotty, the sensors are designed to store a few hours of data locally if they can't connect. When the connection is restored, they send all their buffered data in a burst. This means the cloud platform might receive data out of order or in large chunks, making a batch job perfect for processing it consistently.

Step-by-Step Data Flow

**Data Generation (Devices):** Throughout yesterday, each temperature sensor in the remote warehouse took a reading every 15 minutes. These readings include the temperature value, a timestamp, and the sensor's unique ID. If a sensor temporarily lost its network connection, it held onto these readings.

**Data Transmission (Network):** When connected, the sensors send their readings to a cloud IoT hub. This hub is specifically designed to receive messages from many devices. The data might arrive continuously or in bursts if sensors were offline.

**Initial Storage (Cloud Data Lake):** The cloud IoT hub forwards all incoming temperature data to a scalable storage solution, like a data lake. Here, all of yesterday's raw temperature readings from every sensor are stored just as they arrive. Each piece of data is tagged with its original timestamp and the time it was received.

**Batch Job Trigger (Scheduler):** At 3:00 AM today, a scheduled job (perhaps a cloud function or a managed batch service) is automatically triggered. Its purpose is to process all the temperature data collected from yesterday.

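**Data Retrieval (Batch Engine):** The batch processing engine starts up. It queries the data lake, specifically looking for all temperature readings whose original timestamps fall on yesterday's date (e.g., from 00:00:00 yesterday up to, but not including, 00:00:00 today). Filtering on the original timestamp rather than the time of receipt matters here, because buffered readings can arrive hours after they were taken. This ensures it only works with the relevant historical data.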

**Data Processing (Batch Engine):** The engine then performs several tasks on this collected data:

- **Filtering:** It might remove any duplicate readings or obviously faulty sensor values.

- **Aggregation:** For each warehouse zone, it calculates the average temperature for the entire day.

- **Analysis:** It identifies any specific timestamps and sensor IDs where the temperature went above or below the acceptable range (e.g., too hot or too cold).

- **Transformation:** It might format the data into a more readable report structure.

**Result Storage (Reporting Database):** The processed results – the daily averages per zone, and a list of temperature excursions – are then stored in a different database, one optimized for quick reporting and dashboard display.

**Reporting and Alerts:** Business users can now access a dashboard or receive an email report showing yesterday's temperature summary. If any critical temperature excursions were found, alerts can be sent to the operations team as soon as the batch job finishes.

This example shows how a remote IoT batch job that processes everything collected since yesterday helps manage and make sense of historical data from far-off devices, ensuring important insights aren't missed.
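The retrieval and processing steps above can be sketched in plain Python. This is only a small stand-in for what a real batch engine would do at scale, and several things here are assumptions: the field names (`sensor_id`, `zone`, `timestamp`, `temp_c`), the acceptable range of 2 to 8 degrees Celsius, the plausibility limits used to spot faulty values, and the function name `process_day`.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from statistics import mean

ACCEPTABLE_RANGE = (2.0, 8.0)     # assumed storage limits, degrees Celsius
PLAUSIBLE_RANGE = (-40.0, 60.0)   # assumed; anything outside is a sensor fault


def process_day(readings, day_start):
    """Filter, aggregate, and analyze one day of temperature readings."""
    day_end = day_start + timedelta(days=1)
    seen = set()
    clean = []
    for r in readings:
        key = (r["sensor_id"], r["timestamp"])
        if not (day_start <= r["timestamp"] < day_end):
            continue  # retrieval: keep only yesterday's readings
        if key in seen:
            continue  # filtering: drop duplicates from retransmissions
        if not (PLAUSIBLE_RANGE[0] <= r["temp_c"] <= PLAUSIBLE_RANGE[1]):
            continue  # filtering: drop obviously faulty values
        seen.add(key)
        clean.append(r)

    # Aggregation: average temperature per warehouse zone
    by_zone = defaultdict(list)
    for r in clean:
        by_zone[r["zone"]].append(r["temp_c"])
    averages = {zone: mean(temps) for zone, temps in by_zone.items()}

    # Analysis: readings outside the acceptable range, in time order
    low, high = ACCEPTABLE_RANGE
    excursions = [
        (r["timestamp"], r["sensor_id"], r["temp_c"])
        for r in sorted(clean, key=lambda r: r["timestamp"])
        if not (low <= r["temp_c"] <= high)
    ]
    return {"averages": averages, "excursions": excursions}


def at(hour, minute, day=1):
    """Helper for building hypothetical UTC timestamps in May 2024."""
    return datetime(2024, 5, day, hour, minute, tzinfo=timezone.utc)


# Hypothetical raw data, deliberately out of order and with a duplicate
raw = [
    {"sensor_id": "s2", "zone": "B", "timestamp": at(0, 30), "temp_c": 5.5},
    {"sensor_id": "s1", "zone": "A", "timestamp": at(0, 15), "temp_c": 4.0},
    {"sensor_id": "s1", "zone": "A", "timestamp": at(0, 15), "temp_c": 4.0},
    {"sensor_id": "s2", "zone": "B", "timestamp": at(0, 15), "temp_c": 9.5},
    {"sensor_id": "s2", "zone": "B", "timestamp": at(0, 45), "temp_c": 999.0},
    {"sensor_id": "s1", "zone": "A", "timestamp": at(0, 30), "temp_c": 6.0},
    {"sensor_id": "s1", "zone": "A", "timestamp": at(0, 0, day=2), "temp_c": 5.0},
]

report = process_day(raw, day_start=at(0, 0))
print(report["averages"])
print(report["excursions"])
```

Notice that the order in which readings arrive doesn't matter to the result, which is the property that makes a batch job a good fit for sensors that send buffered data in bursts.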

Addressing Common Challenges

Working with remote IoT devices and batch jobs isn't always smooth sailing. There are a few hurdles that commonly pop up, and knowing about them beforehand can really help you prepare. It's a bit like knowing the tricky spots on a hiking trail; you can pack the right gear and plan your steps carefully.

These challenges often relate to the "remote" nature of the devices and the sheer volume of data involved. But for most of these issues, there are pretty good ways to handle them. We'll look at some of the more frequent problems and, you know, talk about how to get around them.

Connectivity Concerns

One of the biggest issues with remote IoT is, quite naturally, maintaining a steady connection. Devices might be in areas with poor cellular coverage, or their Wi-Fi might drop occasionally. This can lead to data delays or even lost readings if not handled properly. It's a rather common problem, actually.

To combat this, devices should have local storage capabilities. This means they can save data points when the network is down and then transmit them all at once when the connection is restored. This is often called "store and forward." Additionally, using robust communication protocols that can handle intermittent connections helps a lot.
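Here is a rough sketch of the store-and-forward idea, written in Python for readability even though real device firmware is often written in C or similar. The class name `StoreAndForwardBuffer`, the capacity, and the `send` callback are all hypothetical. One design choice is baked in as an assumption: when the buffer is full, the oldest reading is dropped to make room for the newest.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Keep readings locally until they can be sent."""

    def __init__(self, capacity):
        # A deque with maxlen silently drops the oldest item when full.
        self._pending = deque(maxlen=capacity)

    def record(self, reading):
        """Store a new reading, whether or not the network is up."""
        self._pending.append(reading)

    def flush(self, send):
        """Send pending readings oldest-first; stop at the first failure.

        `send(reading)` should return True on success. Readings are only
        removed after a successful send, so nothing is lost on failure.
        """
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._pending)


buffer = StoreAndForwardBuffer(capacity=3)
for value in [20.1, 20.3, 20.2, 20.4]:
    buffer.record(value)          # the fourth reading pushes out the first

delivered = []


def offline(reading):
    return False                  # simulates a dropped connection


def online(reading):
    delivered.append(reading)     # simulates a successful upload
    return True


print(buffer.flush(offline), len(buffer))
print(buffer.flush(online), delivered)
```

Whether to drop the oldest or the newest data when storage fills up depends on the application, so treat that part as something to decide deliberately rather than a default.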

Also, having monitoring in place to alert you when devices go offline for extended periods is very helpful. This allows you to investigate connectivity issues quickly, you know, before too much data piles up.

Data Volume and Performance

IoT devices can generate a tremendous amount of data, especially when you're collecting it from many sensors over a whole day. Processing all of "yesterday's" data in a batch job can be resource-intensive and take a long time if your system isn't set up to handle it efficiently. It's a bit like trying to empty a swimming pool with a teacup.

Using scalable cloud services for both storage and processing is a great way to manage this. Many of these services can automatically adjust their capacity based on how much data you have, so you typically pay only for what you use. Techniques like data compression before transmission and using efficient data formats can also reduce the load.
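As a small illustration of compression before transmission, the sketch below packs a day's readings as newline-delimited JSON and gzips them using Python's standard library. The function names `pack` and `unpack` and the reading fields are assumptions; the actual savings depend heavily on your data, so measure with real payloads before relying on any figure.

```python
import gzip
import json


def pack(readings):
    """Serialize readings as compact JSON lines and gzip the result."""
    lines = "\n".join(json.dumps(r, separators=(",", ":")) for r in readings)
    return gzip.compress(lines.encode("utf-8"))


def unpack(blob):
    """Reverse of pack(): gunzip and parse one JSON object per line."""
    text = gzip.decompress(blob).decode("utf-8")
    return [json.loads(line) for line in text.splitlines()]


# Hypothetical day of readings from one sensor: 96 readings, 15 minutes apart
day = [
    {"sensor_id": "s1", "ts": 1714521600 + i * 900, "temp_c": 4.0}
    for i in range(96)
]

raw_size = len(json.dumps(day).encode("utf-8"))
packed = pack(day)
print(raw_size, "bytes as plain JSON,", len(packed), "bytes gzipped")
```

Sensor data tends to be repetitive (same field names, similar values), which is why general-purpose compression often helps here.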

Furthermore, optimizing your batch processing code to be as efficient as possible is key. This means writing queries that run quickly and choosing algorithms that scale well with large datasets. It really does make a difference to the overall speed.

Ensuring Data Accuracy and Integrity

With data coming from remote locations and potentially over unreliable networks, there's always a risk of errors, corruption, or missing data points. Ensuring that the data you're processing is accurate and complete is paramount for making good decisions. You want to trust the information you're getting, you know?

Implementing data validation checks at various stages is crucial. This includes checks on the device itself, upon reception at the IoT hub, and again within the batch processing engine. You might check for expected data ranges, correct data types, and consistent timestamps. Any data that looks suspicious should be flagged or corrected.

Using checksums or other data integrity checks during transmission can also help ensure that the data received is the same as the data sent. Having a clear strategy for handling missing data, such as interpolation or flagging gaps, is also very important for maintaining a reliable dataset.
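The checks described in this section can be sketched in a few lines of Python. Everything named here is an assumption for illustration: the fields `temp_c` and `ts` (epoch seconds), the expected range, the 15-minute reporting interval, and the function names. SHA-256 is used as the checksum simply because it ships with Python; a lighter checksum may be a better fit on constrained devices.

```python
import hashlib


def payload_checksum(payload):
    """Checksum the sender attaches and the receiver recomputes."""
    return hashlib.sha256(payload).hexdigest()


def validate_reading(reading, low=-40.0, high=60.0):
    """Return a list of problems found; an empty list means it looks fine."""
    problems = []
    temp = reading.get("temp_c")
    if isinstance(temp, bool) or not isinstance(temp, (int, float)):
        problems.append("temp_c is not a number")
    elif not (low <= temp <= high):
        problems.append("temp_c out of expected range")
    if not isinstance(reading.get("ts"), int):
        problems.append("ts is not an integer epoch second")
    return problems


def find_gaps(timestamps, interval=900):
    """Return (before, after) pairs where readings are further apart
    than the expected interval, so the gap can be flagged."""
    ordered = sorted(timestamps)
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > interval]


payload = b'{"sensor_id":"s1","ts":1714521600,"temp_c":4.0}'
sent_checksum = payload_checksum(payload)

print(payload_checksum(payload) == sent_checksum)           # intact payload
print(payload_checksum(payload + b" ") == sent_checksum)    # altered payload
print(validate_reading({"temp_c": "hot", "ts": 1714521600}))
print(find_gaps([0, 900, 2700, 3600]))
```

This sketch only flags gaps; whether you then interpolate or simply report the gap is a decision for your own reporting rules.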

Security for Remote Operations

Remote IoT devices can be vulnerable points in a network if not secured properly. Protecting the data as it travels from the device to your processing system, and ensuring that only authorized entities can access it, is absolutely critical. This is a rather big concern for any connected system.

Encryption should be used for all data transmission, both from the device to the cloud and between different components of your processing system. Authentication mechanisms should be in place to verify the identity of devices and users trying to access the data or the batch job. This means strong passwords, API keys, or certificates.
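As one hedged example of verifying that a message really came from a known device, the sketch below signs each payload with a per-device secret using HMAC-SHA256 from Python's standard library. The key value and function names are made up for illustration, and this is message authentication only: it does not encrypt anything, so it would sit alongside transport encryption such as TLS rather than replace it.

```python
import hashlib
import hmac


def sign(payload, key):
    """Compute an HMAC-SHA256 signature for a payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(payload, key, signature):
    """Check a signature using a constant-time comparison."""
    return hmac.compare_digest(sign(payload, key), signature)


# Hypothetical per-device secret; never hard-code real keys like this.
device_key = b"example-secret-for-sensor-s1"
payload = b'{"sensor_id":"s1","ts":1714521600,"temp_c":4.0}'

signature = sign(payload, device_key)
print(verify(payload, device_key, signature))                  # genuine message
print(verify(payload + b"x", device_key, signature))           # tampered payload
print(verify(payload, b"some-other-key", signature))           # wrong key
```

Many cloud IoT platforms handle device identity with certificates instead, so check what your platform offers before building your own scheme.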

Regular security audits and updates for device firmware and software components are also essential. It's about creating layers of protection to keep your remote IoT data safe from unauthorized access or tampering. You know, it's a constant effort to stay secure.

Making the Most of Historical IoT Data

Once you've successfully run your remote IoT batch job over everything collected since yesterday, you have a treasure trove of historical data. This information is incredibly valuable, offering insights that real-time data alone cannot provide. It's not just about what's happening now, but also about understanding patterns.
